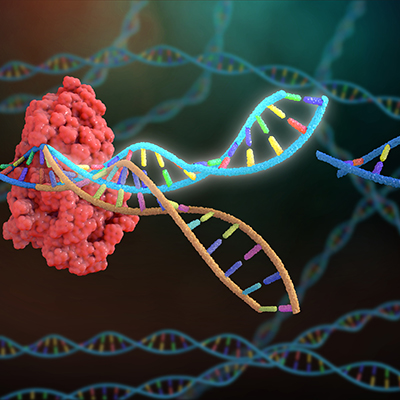

August 2, 2022 -- DeepMind and the European Molecular Biology Laboratory-European Bioinformatics Institute (EMBL-EBI) have used artificial intelligence (AI) to predict the 3D structures of nearly all cataloged proteins.

Following the project, the AlphaFold database, which DeepMind (a sibling company of Google) created with EMBL-EBI, contains more than 200 million protein structures, up from fewer than 1 million previously.

DeepMind and EMBL-EBI shared details of the AlphaFold expansion last week, shortly after researchers at the Chan Zuckerberg Biohub published a paper on a deep-learning method for providing information on protein location and function within a cell. The researchers say the method could shorten research time for cell biologists and accelerate drug discovery and screening.

The DeepMind-EMBL-EBI project built on the July 2021 launch of the AlphaFold database. At launch, the database featured more than 350,000 protein structure predictions, including all human proteins. Later, the collaborators added 27 new proteomes, including 17 related to neglected tropical diseases.

Now, the partners have expanded the database to cover almost every protein sequence in the UniProt database, meaning it features almost every organism on Earth that has had its genome sequenced. The expanded database covers proteins made by plants, bacteria, animals, and other organisms that may be relevant to research into sustainability, food insecurity, and neglected diseases.

"We released AlphaFold in the hopes that other teams could learn from and build on the advances we made, and it has been exciting to see that happen so quickly. Many other AI research organizations have now entered the field and are building on AlphaFold's advances to create further breakthroughs. This is truly a new era in structural biology, and AI-based methods are going to drive incredible progress," John Jumper, research scientist and AlphaFold lead at DeepMind, said in a statement.

Separately, the Chan Zuckerberg Biohub project, details of which were published in Nature Methods, involved the development of a machine-learning method that requires no prior knowledge to quantitatively analyze and compare microscopy images of proteins.

Rather than train the algorithm by showing it examples, the researchers developed a system capable of supervising its own learning. Self-supervised learning reduces the manual work involved in setting up an algorithm and tackles the bias that can occur when humans pick the images used to teach the model. The self-supervised approach worked better than expected.

"The degree of detail in protein localization was way higher than we would've thought," Manuel Leonetti, PhD, co-corresponding author of the study and group leader at CZ Biohub, said in a statement. "The machine transforms each protein image into a mathematical vector. So then you can start ranking images that look the same. We realized that by doing that we could predict, with high specificity, proteins that work together in the cell just by comparing their images, which was kind of surprising."

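The ranking idea Leonetti describes can be illustrated with a minimal sketch. It is not the study's model or code: the vectors below are made-up toy values, the protein names and the `rank_by_similarity` helper are hypothetical, and cosine similarity is assumed here as the comparison measure.

```python
import numpy as np


def rank_by_similarity(embeddings, names, query):
    """Rank proteins by how similar their image vectors are to the query's.

    Hypothetical helper for illustration; cosine similarity is an assumed
    choice of measure, not one confirmed by the article.
    """
    # Scale every vector to unit length so a dot product equals cosine similarity.
    unit = embeddings / np.linalg.norm(embeddings, axis=1, keepdims=True)
    query_vector = unit[names.index(query)]
    scores = unit @ query_vector
    # Sort from most to least similar and leave out the query itself.
    order = np.argsort(-scores)
    return [(names[i], float(scores[i])) for i in order if names[i] != query]


# Toy vectors standing in for the vectors a trained model would produce.
names = ["protein_A", "protein_B", "protein_C", "protein_D"]
embeddings = np.array([
    [0.9, 0.1, 0.0],
    [0.8, 0.2, 0.1],
    [0.0, 0.1, 0.9],
    [0.1, 0.9, 0.2],
])

ranked = rank_by_similarity(embeddings, names, "protein_A")
for name, score in ranked:
    print(f"{name}: {score:.3f}")
```

In this toy data, `protein_B` ranks first because its vector points in nearly the same direction as that of `protein_A`. In the setting the article describes, a high rank of this kind is what would suggest two proteins work together in the cell.
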
Although there has been previous research on protein images using self-supervised or unsupervised models, the study's authors contend this is the first time self-supervised learning has been used so successfully on such a large dataset: more than 1 million images covering more than 1,300 proteins measured from live human cells.

Copyright © 2022 scienceboard.net